Charlieplexing ( named after Charlie Allen ) is a great way to save pins on a microcontroller. Since each pin can be either high, low, or off ( 'high impedance' ), and LEDs conduct in only one direction, one can place two LEDs for each unique pair of microcontroller pins. This also works with other devices, like buttons, but you can only place one for each pair of pins since they conduct in both directions*.

However, a recent foray into designing with charlieplexing revealed a drawback: soldering a zillion discrete LEDs is time consuming and not for everyone. It is easier to use LED modules, which have LEDs already wired up, and are designed to be driven by multiplexing. For an N by M multiplexed grid you need N+M driving pins, whereas for an N by M charlieplexed grid you need only K pins, where K is the smallest number with K(K-1)≥NM **. Fortunately, there is often a way to charlieplex LED matrices to save pins without increasing assembly difficulty.

Thinking about charlieplexed grids

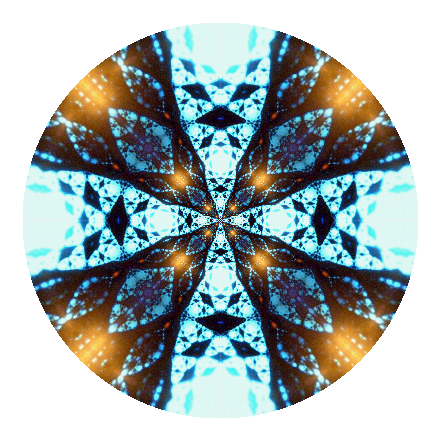

One might ask: how the hell am I supposed to keep track of all the possible combinations used in Charlieplexing? Since each pin can be either high (positive, or anode) or low (negative, or cathode), we can draw a K by K grid for K pins, where the cases where a pin acts as an anode are on one axis, and as a cathode, the other. Along the diagonal you have sites where a pin is supposed to act as both an anode and a cathode -- these are forbidden, and are blacked out. Here is an example grid for 16 pins:
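The same bookkeeping can be done in code. The sketch below is only an illustration: the helper names `cell_index` and `cell_pins` are hypothetical, and the numbering scheme (row by row along the anode axis, skipping the diagonal) is an assumption rather than anything the grid drawing prescribes. It numbers the K(K-1) usable cells of a K-pin grid and converts back to an anode/cathode pair.

```c
#include <stdint.h>

/* Hypothetical helpers: number the K*(K-1) usable cells of a K-pin grid,
   skipping the forbidden diagonal where anode == cathode. */

/* Returns the cell index for (anode, cathode), or -1 if the pair is on the
   diagonal or out of range. */
int cell_index(uint8_t k, uint8_t anode, uint8_t cathode)
{
    if (anode >= k || cathode >= k || anode == cathode) return -1;
    /* Each anode row holds k-1 usable cells; cathodes past the diagonal
       shift down by one to close the gap. */
    return anode * (k - 1) + (cathode < anode ? cathode : cathode - 1);
}

/* Inverse mapping: recover the anode and cathode pins from a cell index.
   Assumes k >= 2 and 0 <= index < k*(k-1). */
void cell_pins(uint8_t k, int index, uint8_t *anode, uint8_t *cathode)
{
    uint8_t a = (uint8_t)(index / (k - 1));
    uint8_t c = (uint8_t)(index % (k - 1));
    if (c >= a) c++;   /* step over the diagonal */
    *anode = a;
    *cathode = c;
}
```

For 16 pins this numbers 240 cells, which is the count of usable squares in a 16 by 16 grid once the diagonal is removed.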

Placing modules

I can now place components on this grid to fill it up. Say I have an 8x8 LED matrix with 8 cathodes and 8 anodes. All I have to do is find an 8x8 space large enough to hold it somewhere on the grid. For example, two 8x8 LED matrices fit into this 16-pin grid:

Another common size for LED matrices is 5x7. We can fit two of them on 12 pins like so:

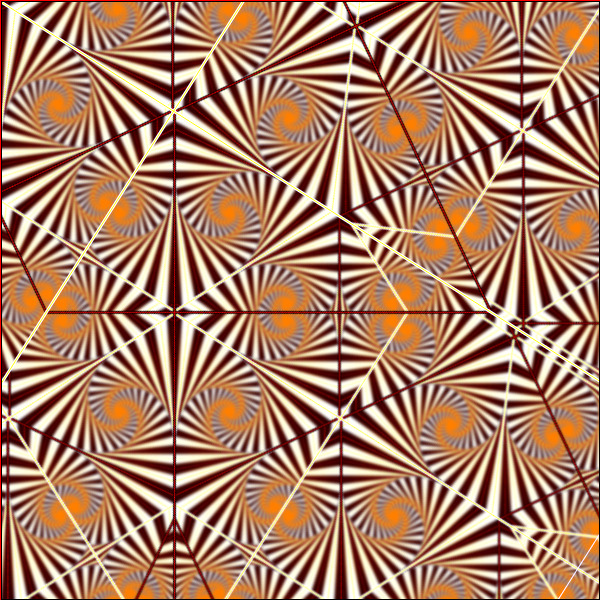

Now it gets fun. It's ok for components to wrap around the sides. We can fit four 5x7 ( for a 10x14 pixel game board perhaps? ) matrices on 16 pins like this:

We can fit six 5x7 matrices on 18 pins ( for a 10x21 pixel game board perhaps? Large enough for original tetris! ). Eight 5x7 matrices fit on 20 pins. 8x8 matrices are a little more clunky, but you can still fit 3 of them onto 20 pins or 4 of them onto 22 pins ( 22 pins also fits 10 5x7 arrays ). We leave these last three as exercises ( solutions 1 2 3 4 ).
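A candidate layout can also be checked mechanically. The sketch below is a hypothetical helper, not part of the original design: the names `module_t`, `place_module`, and `layout_fits` are made up for illustration, and it assumes every module uses a run of consecutive anode pins and a run of consecutive cathode pins, wrapping around modulo the pin count. A layout is accepted if no module touches the forbidden diagonal and no two modules share a cell.

```c
#include <stdint.h>
#include <string.h>

#define MAX_PINS 32   /* assumed upper bound on the pin count */

/* A rectangular module: `rows` consecutive anode pins starting at anode0 and
   `cols` consecutive cathode pins starting at cathode0, wrapping modulo k. */
typedef struct {
    uint8_t anode0, rows;
    uint8_t cathode0, cols;
} module_t;

/* Marks the module's cells in `grid`. Returns 1 on success, or 0 (leaving
   the grid untouched) if a cell is on the diagonal or already taken. */
int place_module(uint8_t grid[MAX_PINS][MAX_PINS], uint8_t k, module_t m)
{
    uint8_t i, j;
    if (k == 0 || m.rows > k || m.cols > k) return 0;
    /* First pass: check every cell without modifying the grid. */
    for (i = 0; i < m.rows; i++)
        for (j = 0; j < m.cols; j++) {
            uint8_t a = (uint8_t)((m.anode0 + i) % k);
            uint8_t c = (uint8_t)((m.cathode0 + j) % k);
            if (a == c || grid[a][c]) return 0;
        }
    /* Second pass: commit the placement. */
    for (i = 0; i < m.rows; i++)
        for (j = 0; j < m.cols; j++)
            grid[(m.anode0 + i) % k][(m.cathode0 + j) % k] = 1;
    return 1;
}

/* Returns 1 if every module in the list fits on a k-pin grid. */
int layout_fits(uint8_t k, const module_t *mods, int count)
{
    uint8_t grid[MAX_PINS][MAX_PINS];
    int n;
    if (k > MAX_PINS) return 0;
    memset(grid, 0, sizeof grid);
    for (n = 0; n < count; n++)
        if (!place_module(grid, k, mods[n])) return 0;
    return 1;
}
```

For example, one 8x8 module on anodes 0..7 and cathodes 8..15 plus a second on anodes 8..15 and cathodes 0..7 passes `layout_fits` with 16 pins, while a single 8x8 module starting at cathode 8 is rejected on 12 pins because its wrapped cathodes collide with its own anodes.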

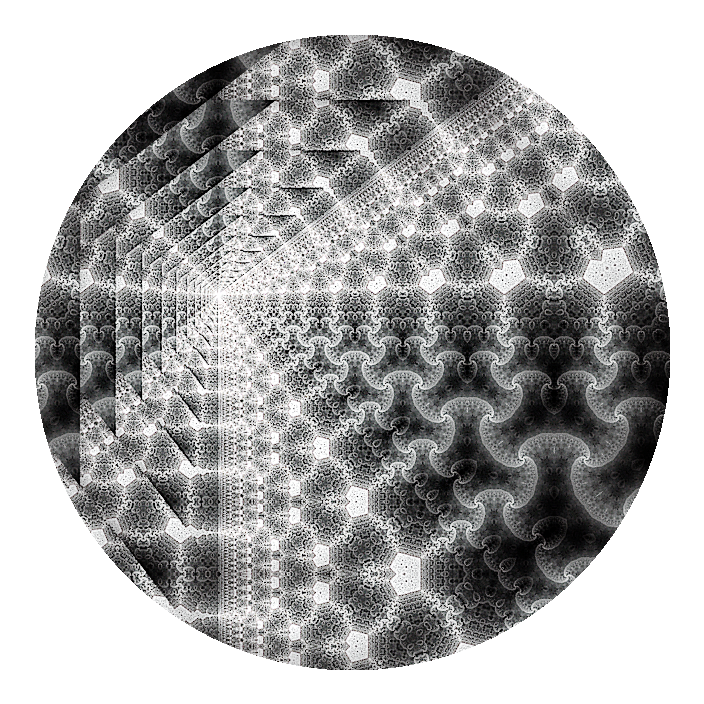

To demonstrate that this approach does in fact work, I rigged up a little game of life on four 8x8 modules running on 22 pins on an AtMega328. After correcting for a problem with the brightness related to the PORTC pins sourcing less current, the display is quite functional -- the scanning is not visible and all lights are of equal brightness. I scan the lights one at a time, but only spend time on those that are on. (The variable frame rate is from the video processing -- the actual device is quite smooth)

Other packaged LED modules can be laid out similarly. 7 segment displays ( 8 with decimal point ) come packaged in "common cathode" and "common anode" configurations, which would be represented as a column of 8 cells, or a row of 8 cells, respectively. Often, four 7-segment displays ( 8 with decimal ) are packaged at once in a multiplexed manner -- these would be represented as a 4x8 or 8x4 block on our grid, depending on whether they were common anode or cathode. RGB LEDs also come packaged in common cathode and anode configurations. For example, here is how one could charlieplex 14 common anode RGB LEDs on 7 pins:
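One assignment that works can be written down in a few lines of C. This is an assumption for illustration, not necessarily the layout the author drew: each of the 7 pins serves as the common anode of two RGB LEDs, the first taking the next three pins (modulo 7) as its cathodes and the second taking the three pins after that. The names `rgb_led_t` and `rgb_layout`, and the red/green/blue ordering, are hypothetical.

```c
#include <stdint.h>

#define RGB_PINS 7

/* One common-anode RGB LED: a shared anode pin and three cathode pins. */
typedef struct {
    uint8_t anode;
    uint8_t cathode[3];   /* assumed order: red, green, blue */
} rgb_led_t;

/* Returns the pin assignment for LED number `led` (0..13).
   Pin p is the common anode of LEDs 2p and 2p+1. */
rgb_led_t rgb_layout(uint8_t led)
{
    rgb_led_t out;
    uint8_t half = led & 1;   /* 0: first LED on this anode, 1: second */
    uint8_t j;
    out.anode = led >> 1;
    for (j = 0; j < 3; j++)
        out.cathode[j] = (uint8_t)((out.anode + 1 + 3 * half + j) % RGB_PINS);
    return out;
}
```

With this assignment all 7*6 = 42 anode/cathode cells are used exactly once, three per LED, and none falls on the diagonal.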

Hardware note: don't blow it up

When driving LEDs with multiplexing or charlieplexing, it is not uncommon to omit current limiting resistors. Since the grid is scanned, only a few LEDs will be on at once, and all LEDs spend most of their time off. If the supply voltage lies between the typical forward voltage, and the peak instantaneous voltage, we can figure out the largest acceptable duty cycle and enforce this in software. However, now one must ensure that software glitches do not cause the array scanning to stall, or that LEDs can survive a period of elevated forward voltage.

Microcontrollers will have a maximum safe current per IO pin. Sometimes, you can rely on the microcontroller to limit current to this level. Other times, attempting to force more than the maximum rating through a pin will damage the microcontroller. You can ensure that this never happens in software by never turning on more LEDs than a single IO pin can handle. Or, you can use tri-state drivers. If your microcontroller limits over-current, you can probably turn on as many LEDs as you want at once, but they will dim as the limited current is shared among more LEDs.

Combining devices

There is nothing stopping us from combining different types of LED modules, or LEDs and buttons, in our grid. However, buttons conduct in both the forward and backward direction, so they occupy both the anode-cathode and cathode-anode positions for any pair of pins. I represent this as a black and white pair of buttons in the grid drawing. For example, one could get an acceptable calculator with 6 display digits and 21 buttons onto 10 pins if you use a mix of common-cathode and common-anode 7-segment displays like so:

You could probably get a pretty decent mini-game using the space left over from Charlie-muxing four 5x7 modules on 16 pins. There is enough room to fit 17 buttons and 6 7-segment displays (shown as earth-tone strips below):

For the grand finale, we revisit the six 5x7 modules on 18 pins. Apart from giving us a grid large enough to hold classic Tetris, we also have room for 18 buttons, 6 7-segment displays (shown as earth-tone strips below), with 12 single-LED spots left over -- all on 18 pins. On an AtMega, this would leave 5 IO pins free -- enough room to fit a serial interface, piezo speaker, and crystal oscillator. Programming, however, would be a challenge.***

Hardware note: combining different LED colors in one grid

There are problems with combining different LEDs in one grid. If two LEDs with different forward voltages are placed on the same, say, cathode, then the one with the lower forward voltage can hog all the current, and the other LED won't light. I have found that ensuring in software that LEDs with mixed forward voltages are never illuminated simultaneously solves this problem.

Also ensure that your largest forward voltage is smaller than twice the lowest forward voltage. For example, if you try to drive a 3.6V white LED in a matrix that contains 1.8V red LEDs, the current may decide to take a shortcut through two red LEDs rather than the white LED. However, it may be possible to ensure that there are no such paths by design. You must ensure that for every 3.6V forward path from pin A to B, there are no two 1.8V forward paths AC and CB for any C.
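The rule in the last sentence can be checked by brute force over a table of forward voltages. The sketch below is a hypothetical helper, with assumptions stated up front: the grid size `VF_PINS`, the millivolt table `vf_mv`, and the function name `no_sneak_paths` are all made up for illustration, and only two-LED series paths are considered, as in the rule above. A path is flagged when its combined forward voltage does not exceed that of the LED it bypasses.

```c
#include <stdint.h>

#define VF_PINS 8   /* assumed grid size for this example */

/* vf_mv[a][c] is the forward voltage, in millivolts, of the LED whose anode
   is on pin a and whose cathode is on pin c. Zero means the cell is empty. */

/* Returns 1 if no LED a->b can be bypassed by two LEDs in series a->c->b
   whose combined forward voltage is less than or equal to its own. */
int no_sneak_paths(uint16_t vf_mv[VF_PINS][VF_PINS])
{
    uint8_t a, b, c;
    for (a = 0; a < VF_PINS; a++)
        for (b = 0; b < VF_PINS; b++) {
            if (a == b || vf_mv[a][b] == 0) continue;
            for (c = 0; c < VF_PINS; c++) {
                if (c == a || c == b) continue;
                if (vf_mv[a][c] == 0 || vf_mv[c][b] == 0) continue;
                /* Widen before adding so the sum cannot overflow 16 bits. */
                if ((uint32_t)vf_mv[a][c] + vf_mv[c][b] <= vf_mv[a][b])
                    return 0;
            }
        }
    return 1;
}
```

A grid filled entirely with 1.8V LEDs passes, because any two-LED detour needs 3.6V. Adding a single 3.6V LED between pins A and B fails as soon as some pin C carries 1.8V LEDs on both A to C and C to B.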

Driving software

Saving microcontroller pins and soldering time is well and good, but programming for these grids can be a real challenge! Here are some practices ( for AVR ) that I have found useful.

- Overclock the processor. Most AVRs are configured to 1MHz by default, but can run up to 8MHz even without an external crystal. The AVR fuse calculator is a godsend. Test the program first without overclocking, then raise the clock rate. Ensure that the power supply voltage is high enough for the selected clock rate. If things get dire and you need more speed, you can tweak the OSCCAL register as well.

- Prototype driver code on a device that can be removed and replaced if necessary. Repeated messing with the fuses to tweak the clock risks bricking the AVR. It's a shame when you have a bricked AVR soldered in a TQFP package.

- Row-scan the grid. If this places too much current on the IO pins, break each row into smaller pieces that are safe. If too many LEDs are lit on a row and they appear dim, adjust the time the row is displayed to compensate.

- Store the LED state vector in the format that you will use to scan. Write set() and get() methods to access and manipulate this state that map the structure of the charlieplexing grid onto the logical structure of your displays. Scanning code is hard enough to get fast and correct without worrying about the abstract logical arrangement of the LED grid.

- Use a single timer interrupt to do all of the scanning. Having multiple recurring timer interrupts along with a main loop can create interesting interference and beat effects in the LED matrix that are hard to debug.

- If there are buttons and LEDs on the same grid, switch to polling the buttons every so often at a fixed interval, and write their state into volatile memory that other threads can query.

- If your display is sparse ( e.g. a game of life ) you can skip sections that aren't illuminated to get a higher effective refresh rate. If your display is very sparse, and you have a lot of memory to spare, you can even scan LEDs one at a time.
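As an illustration of the row-scanning advice above, here is a minimal sketch of breaking a row into pieces that are safe for one IO pin. The limit `MAX_LIT_PER_PIN` and the function name `split_row` are hypothetical, and the right limit depends on your LEDs and microcontroller. Each resulting chunk would be displayed for one time slot, so a full row simply takes more slots than a sparse one and every lit LED gets the same on-time.

```c
#include <stdint.h>

/* Assumed limit: how many LEDs one IO pin may drive at the same time. */
#define MAX_LIT_PER_PIN 4

/* Splits a 16-bit row pattern into sub-patterns that each light at most
   MAX_LIT_PER_PIN LEDs. Writes them to `chunks` (16 entries is always
   enough) and returns how many chunks were produced. */
uint8_t split_row(uint16_t row, uint16_t *chunks)
{
    uint8_t count = 0;
    uint8_t lit = 0;
    uint8_t bit;
    uint16_t current = 0;
    for (bit = 0; bit < 16; bit++) {
        uint16_t mask = (uint16_t)(1u << bit);
        if (!(row & mask)) continue;
        current |= mask;
        if (++lit == MAX_LIT_PER_PIN) {
            /* This chunk is full: emit it and start a new one. */
            chunks[count++] = current;
            current = 0;
            lit = 0;
        }
    }
    if (lit) chunks[count++] = current;   /* emit the partial last chunk */
    return count;
}
```

An empty row produces no chunks at all, which also gives the "skip sections that aren't illuminated" behaviour for free.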

Conclusion

This document outlines how to drive many LED modules from a limited number of microcontroller pins. The savings in part cost and assembly time are offset by increased code complexity. These design practices would be useful for someone who enjoys coding puzzles, or gets a kick out of making microcontrollers do way more than they are supposed to. They could also be useful for reducing part costs and assembly time for mass produced devices, where the additional time in driver development is offset by the savings in production. I originally worked through these notes when considering how to build easy-to-assemble LED marquee kits, but as I have no means to produce such kits, nor easy mechanism for selling them, I am leaving the notes up here for general benefit.

_______________________________________________

*If you place a diode in series with a button you can place two buttons for each unique pair of pins. One can make this diode a LED to create a button-press indicator.

**For those interested, K*(K-1)=N*M solves to K = ceil[ ( 1 + sqrt( 1+4NM ) ) / 2]
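The same bound can be computed without floating point. This is a small sketch with a hypothetical function name, `min_charlie_pins`. It returns the smallest K with K(K-1) at least the number of LEDs. Note that this is a lower bound for discrete LEDs. Rectangular modules usually need more pins, since they must fit on the grid as whole blocks ( two 8x8 matrices need 16 pins above, while the bound for 128 LEDs is 12 ).

```c
/* Smallest K with K*(K-1) >= leds: a lower bound on the number of pins
   needed to charlieplex `leds` individual LEDs. */
unsigned min_charlie_pins(unsigned long leds)
{
    unsigned long k = 1;
    while (k * (k - 1) < leds) k++;
    return (unsigned)k;
}
```

For a single 8x8 matrix this gives 9, which agrees with ceil[ ( 1 + sqrt( 257 ) ) / 2 ].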

***I've tried this with an 8x16 grid using a maximally overclocked AtMega. It is tricky. To avoid beat effects, sound, display, and button polling are handled with the same timer interrupt. The music is intentionally restricted to notes that can be rendered with low clock resolution. Some day I may even write this up.

_______________________________________________

Driving software example: Game of Life on a 16x16 grid

Due to popular demand I've gone through and commented the source code for the game of life demo. There are probably some things that I could have done better, but hopefully it will be a good place to start.

/*

Game of Life charlieplexing four 8x8 LED matrices demo.

Designed for AtMega

_______________________________________________________________________________

# to compile and upload using avr-gcc and avrdude:

# compile

avr-gcc -Os -mmcu=atmega328p ./display.c -o a.o

# grab the text and data sections and pack them into a binary

avr-objcopy -j .text -j .data -O binary a.o a.bin

# check that the binary is small enough to fit!

du -b ./a.bin

# upload using the avr ISP MKII. In this case,

# it is located at /dev/ttyUSB1, but you would change that argument

# to reflect whichever device your programmer has been mounted as

avrdude -c avrispmkII -p m328p -B20 -P /dev/ttyUSB1 -U flash:w:a.bin

Hardware notes

===============================================================================

DDRx : 1 = output, 0 = input

PORTx : output buffer ( writes set pullups when the pin is an input )

PINx : digital input buffer

______

!RESET PC6 -| U |- PC5

PD0 -| |- PC4

PD1 -| |- PC3

PD2 -| |- PC2

PD3 -| m |- PC1

PD4 -| * |- PC0

VCC -| 8 |- GND

GND -| |- AREF

PB6 -| |- AVCC

PB7 -| |- PB5 SCK ( yellow )

PD5 -| |- PB4 MISO ( green )

PD6 -| |- PB3 MOSI ( blue )

PD7 -| |- PB2

PB0 -|_____|- PB1

Programmer pinout, 6 pin:

6 MISO +-+ VCC 3

5 SCK + + MOSI 2

4 RST +-+ GND 1

Programmer pinout, 6 pin, linear:

6 MISO +

5 SCK +

4 RST +

VCC 3 +

MOSI 2 +

GND 1 +

Programmer pinout, 10 pin:

3 vcc +-+ MOSI 2

+ +

+ +] RST 4

+ + SCK 5

1 gnd +-+ MISO 6

PORT : write to here to set output

DDR : write to here to set IO. 1 for output.

PIN : digital input

thanks to http://brownsofa.org/blog/archives/215 for explaining timer interrupts

*/

// avr io gives us common pin definitions,

// and avr interrupt gives us definitions for setting interrupts.

// ( we will use one timer interrupt to update the display )

#include <avr/io.h>

#include <avr/interrupt.h>

// Basic definitions. Our display is 16 pixels wide and 16 pixels high

// This is set in N

// There are 16*16=256 lights total, this is set in NN

// We also use NOP to control timing sometimes, we use an assembly wrapper

// reflected in the macro "NOP"

#define N 16

#define NN ((N)*(N))

#define NOP __asm__("nop\n\t")

// I use two different sets of buffers for display data. Part of this is

// Laziness -- it does consume a lot of memory and wouldn't fly on say an

// AtMega8. But it makes the code a little easier to understand.

// First, I store the display pixels in a "raster-like" format. Here,

// Each row of the display is stored in a sequence of integers, and

// If a pixel is on, then the bit corresponding to that pixel is set to 1,

// Otherwise 0.

// These displays are used to run the game of life logic because it is

// straightforward to read and write pixel data.

// We use 16 bit integers because this makes more sense for a 16x16 array

// But this is an abstraction and 8 or 32 bit integers would work just as well.

// We use a double buffering strategy. So, we declare two buffers, and then

// also make two indirect pointers to these buffers. When we want to update

// the display state, we write into the "buff" pointer, while reading the

// previous game state from the "disp" pointer. Then, when we are ready to

// show the next frame, these pointers will be flipped.

#define BUFFLEN 16

uint16_t b0[BUFFLEN];

uint16_t b1[BUFFLEN];

uint16_t *buff=&b0[0];

uint16_t *disp=&b1[0];

// To actually scan the display, I use a sparse format. When we scan the lights

// we turn them on one at a time. This can make the display very dim if we

// have to turn on all of the lights. However, for something like the Game of

// Life, only a few lights are ever on at the same time. If we only worry

// about scanning the lights that are on, then our display is much brighter.

// However, it is important that the code that scans the display is very

// fast, so that we can run it thousands of times per second so that there is

// no visible flicker to the human eye. So, we prepare a list of which lights

// are on ahead of time. Then, the display scanning code only has to refer to

// this list, which is rapid.

// We use a double buffering strategy here. The active list of lights that

// are on is stored in the "lightList" variable. This is the one that the

// display scanning code actually uses. When we want to prepare a new list,

// we write it into the lightBuff variable, then flip it once it is ready.

// I am somewhat wasteful here and allocate enough space to store two lists

// that might contain all 256 LEDs. I suspect there are ways to use less

// space by being clever, but I can't think of anything at the moment.

uint8_t ll0[NN],ll1[NN];

volatile uint8_t *lightList = &ll0[0];

volatile uint8_t *lightBuff = &ll1[0];

volatile uint16_t lighted = 0;

// This is a simple random number generator. It is not very good, and will

// produce the same sequence of numbers each time the AtMega is turned on,

// but it will suffice for this demonstration.

uint32_t rng = 6719;

uint8_t rintn()

{

rng = ((uint64_t)rng * 279470273UL) % 4294967291UL;

return rng&0xff;

}

// To simplify changing the pin states of the AtMega, I group the three ports

// PORTB PORTC and PORTD into one logical port and then set all three with

// one function call.

void setDDR(uint32_t ddr)

{

DDRB = ddr & 0xff;

ddr >>= 8;

DDRD = ddr & 0xff;

ddr >>= 8;

DDRC = ddr & 0xff;

}

void setPort(uint32_t pins)

{

PORTB = pins & 0xff;

pins >>= 8;

PORTD = pins & 0xff;

pins >>= 8;

PORTC = pins & 0xff;

}

// Spin around NOP for a while -- a quick and lazy way to control timing

void delay(uint32_t n) { while (n--) NOP;}

// Read and write functions for the display data.

// This abstracts away the bit packing and unpacking.

// First argument: one of the display buffers ( either disp or buff )

// Second argument: the pixel index. We use row-major indexing, so

// for example, the first row will count up indices 0..15, then

// the second row will start at index 16 .. 31 and so on and so forth.

// For the set method, the third argument is 0 or 1 -- 1 for "on" and 0 for

// "off"

uint8_t get(uint16_t *b,uint16_t i) { return (b[i>>4]>>(i&15))&1;}

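// set() returns nothing, so it is declared void. The unsigned literal keeps
// the shift by 15 well defined when int is 16 bits wide, as on AVR.
void set(uint16_t *b,uint16_t i,uint8_t v) { if (get(b,i)!=v) b[i>>4]^=1u<<(i&15);}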

// The game of life wraps around at the edges. To abstract away some of the

// difficulty, these previous and next functions will return the previous

// or next row or column, automatically handling wrapping around the edges.

// For example, the next column after column 15 is column 0. The previous

// row to row 0 is row 15.

uint8_t prev(uint8_t x) { return (x>0?x:N)-1; }

uint8_t next(uint8_t x) { return x<N-1?x+1:0; }

// These are parameters to configure the Game of Life. Sometimes the game

// gets stuck. To poke it, I randomly drop in some primitive shapes.

// I have stored these shapes in a bit-packed representation here.

// Also, the TIMEOUT variable tells us how long to let the game stay "stuck"

// before we try to add a new life-form to it. The variable shownFor counts

// how many frames the game has been stuck.

#define glider 0xCE

#define genesis 0x5E

#define bomb 0x5D

#define TIMEOUT 7

uint8_t shownFor;

// These are some macros to make coding the Game of Life a little easier.

// When we update the game, we are always reading from the current state,

// which is stored in the "disp" display buffer, and we are always

// writing the next state into the "buff" display buffer. So, we can

// simplify the Get and Set functions for readability.

#define getLifeRaster(i) get(disp,i)

#define setLifeRaster(i,v) set(buff,i,(v))

// We store the display data in a bit-vector which is row-major ordered.

// The bit vector is indexed by a single number from 0 to 255, but we want

// to think about the Game of Life in terms of rows and columns. So,

// this macro just wraps conversion of row and column numbers into an index

// for readability

#define rc2i(r,c) ((r)*N+(c))

// Finally, combine the index macro with the get and set macros to

// create macros for reading and writing game state based on the row and

// column numbers.

#define getLife(r,c) getLifeRaster(rc2i(r,c))

#define setLife(r,c,v) setLifeRaster(rc2i(r,c),v)

// One step in the Game of Life is to count the number of neighbors that

// are "on". This function computes part of that: it counts the number of

// neighbors that are "on" at the current position (r,c) and also the

// row above and below. This is not a Macro because the Game of Life

// implementation here was ported from an implementation with much less

// flash memory. When you make a macro, that code is expanded with simple

// text substitution before it even gets to the compiler. This means that

// each time we "call" a macro a bunch of code gets included. Sometimes this

// can be optimized out, but not always. By making this a function, we help

// the compiler understand that each "call" can be computed using the same

// code, and this resulted in a smaller binary. TLDR: esoteric design choice

// for size optimization, IGNORE.

uint8_t columnCount(uint8_t r, uint8_t c) {

return getLife(prev(r),c) + getLife(r,c) + getLife(next(r),c);

}

// We store the game state in raster-like buffers of pixel data, but we

// scan the display one light at a time based on a list of which lights are

// on. This function converts from the raster-like representation to the

// list representation. Once we have prepared a new frame of the game, we call

// this function to create a new list of "on" lights for the display scanning

// code. We also flip the pixel and light-list buffers here.

void flipBuffers()

{

// flip the pixel buffers

uint16_t *temp = buff;

buff = disp;

disp = temp;

// scan the new pixel buffer for lit pixels and add them to the list

// of lighted pixels for sparse scanning

uint16_t r,c,i;

uint16_t lightCount = 0;

for (i=0;i<NN;i++)

{

if (get(disp,i))

{

lightBuff[lightCount]=i;

lightList[lightCount]=i;

lightCount++;

}

}

//swap the sparse scanning data buffers

lighted = lighted<lightCount?lighted:lightCount;

volatile uint8_t *ltemp = lightBuff;

lightBuff = lightList;

lightList = ltemp;

lighted = lightCount;

}

// pin definitions. We have wired up four different 8x8 LED matrices to

// The AtMega. I have kept the anodes and cathodes contiguous, so that

// For each array, the anodes and the cathodes use a sequential number of

// Pins. The way I have set up my logical pin numbers, Pins 0..7 are PORTB

// Pins 8..15 are PORTD, and then the rest are PORTC. These arrays store

// The anode or cathode sequence starts for each of the 4 matrices.

const uint8_t cmap[4] = { 21,9, 7,17};

const uint8_t amap[4] = { 12,1,15, 4};

// I didn't know which anode/cathode was which when I wired up the arrays.

// LED matrix pinouts can be idiosyncratic like that. However, I did wire up

// each of the arrays in a consistent manner. So, I can fix this by applying

// a permutation in software. These arrays store the pin permutations for

// the anodes and cathodes. Each matrix has 8 anodes and 8 cathodes.

const uint8_t aperm[8] = {0,2,3,1,7,4,6,5};

const uint8_t cperm[8] = {4,3,1,5,0,6,7,2};

// This function handles the display scanning -- it is called from a timer

// interrupt. The variable scanI keeps track of which light we're currently

// displaying. The variable PWM keeps track of state so that we can turn some

// lights on longer than others. Each time a timer interrupt occurs, this

// code changes which light is displayed. Lights are only shown one at a time.

// The timer interrupt changes them fast enough that they appear to be all

// Illuminated simultaneously.

volatile uint8_t scanI = 0;

volatile uint8_t pwm = 0;

ISR(TIMER0_COMPA_vect)

{

// PWM would allow us to vary the brightness by leaving some lights

// on longer. Here, I just use it to correct for a problem with PORTC.

// For some reason LEDs on PORTC are more dim than they should be. This

// is probably because I am driving the LEDs without current limiting

// resistors, and so LEDs on other ports are drawing more current than

// I expected ( more than 40mA ). Thus, these LEDs are brighter than the

// ones driven using PORTC. It may have something to do with PORTC also

// being capable of analog input? Anyway, it's unclear if this is bad but

// this little PWM script seems to fix it. Also, I intentionally waste

// CPU cycles using a delay loop so that the PWM branch takes as long

// as the light-scanning branch -- this is so that the fraction of time

// taken by the display scanning interrupt routine is roughly constant.

// This is important because if I returned early from the PWM branch,

// The extra CPU cycles would be used by the game logic and the game

// frame rate would vary. A more correct way to do this is to wait after

// each frame of the game of life has been computed, based on timer

// interrupts. But just adding the delay here was easier for me.

if (pwm)

{

pwm--;

delay(110);

return;

}

// We advance to the next light. If for some reason all of the lights are

// off we return immediately. Technically, there are race conditions

// with the flipBuffers() function, but the errors are not catastrophic.

// We only focus on lights that are on, and keep track of how many lights

// are on in the "lighted" variable.

scanI++;

if (!lighted) return;

scanI%=lighted;

// We look up which light should be on, which is stored in the lightList.

// The lower 4 bits contain the column index, and the upper four bits

// contain the row index. Our display consists of 4 8x8 arrays. These are

// laid out with a charlieplexing pattern. So, we do a test to see which

// array our light at row, column (r,c) should be in -- that is what the

// variable 'b' stores. Then we can lookup which anode and cathode we

// should use to turn the light on, now that we know which array to use.

uint8_t light = lightList[scanI];

uint8_t r = light>>4;

uint8_t c = light&0x0f;

uint8_t b = (r<8?0:1)+(c<8?0:2);

// Looking up which anode and cathode to use seems strange at first.

// For each array, I've wired up the anodes and cathodes to be contiguous

// So that, for example, in the fourth array, the cathodes will start at

// logical pin 17, and proceed to logical pin 24. Except there are only

// 22 pins, so it wraps around to the beginning again rather than counting

// Up to 24. So, we get the starting pin, and count up from there, and use

// Modulo to wrap around to the beginning again.

// Finally, I did not know which cathode or anode was which when I wired

// up the arrays -- LED matrix pinouts can be rather idiosyncratic like

// that. So! The anodes and cathodes were all out of order. So I have to

// look up a permutation to get the right one for our specific row and

// column.

uint8_t an = (amap[b]+aperm[r%8]+21)%22;

uint8_t ct = (cmap[b]+cperm[c%8]+21)%22;

// Now that we know which pins to use to turn on the light, we can

// actually set the pin state to achieve this. First we turn all the

// pins to high impedance ("Off" or "input mode"). This is because, to

// set the right pin, we have to change pins in PORTB, PORTC, and PORTD.

// As far as I know, there is no way to change all three of these

// simultaneously -- you have to do them one at a time. This means that

// in the process of changing the pin states we might accidentally turn on

// some lights we didn't mean to. To work around this, first turn off all

// the pins, then set the pin state, then turn back on only the pins that

// we need to use

setDDR(0);

setPort(1UL<<an);

setDDR((1UL<<an)|(1UL<<ct));

// If we are driving the anodes using PORTC, we use a brightness correction

if (an>=16) pwm = 1;

}

int main()

{

// I happen to be using the internal RC oscillator, which has an unknown

// frequency. Setting OSCCAL to 0xff means "run the internal RC oscillator

// FAST". OSCCAL is supposed to be used for calibrating the RC oscillator

// so that it runs at a known frequency, but here I just max out the

// calibration variable. Another way to do this is to change the fuses so

// that the RC oscillator runs faster -- but this works too.

OSCCAL=0xFF;

// I want to configure a timer interrupt to scan the display.

// I determined these values using the AtMega datasheet.

// TIMSK0 = 2 sets the OCIE0A bit, which turns on the timer 0 compare

// match A interrupt. TCCR0B = 2 sets the CS01 bit, which selects the

// clk/8 prescaler. TCCR0A = 2 sets the WGM01 bit, which selects CTC

// ( clear timer on compare match ) mode. This means that the

// counter will increment, and when it gets to OCR0A ( set here to 180 )

// it will call our display scanning code. This lets you tweak how often

// the display is scanned. If it is too slow, you will see flickering. If

// it is too fast, there will not be enough CPU cycles left over to run

// the game logic.

TIMSK0 = 2; // OCIE0A: enable the compare match A interrupt

TCCR0B = 2; // CS01: prescaler clk/8

TCCR0A = 2; // WGM01: CTC mode

OCR0A = 180;// period, in prescaled timer ticks

// Initialize the game to a random state, and update the lightList to

// reflect this.

uint8_t r,c;

for (r=0;r<16;r++) buff[r] = rintn();

flipBuffers();

sei();

// Run the Game of Life

// There are some tedious optimizations here.

// To compute the next frame in the Game of Life, one has to sum up

// the number of "live" or "on" neighbors around each cell.

// To optimize this, we keep a running count.

// When we want to count the number of "live" cells at position (r,c),

// We look at how many cells were alive around position (r,c-1). Then, we

// Subtract the cells from column c-2 and add the cells from column c+1.

// One could also keep a running count along the columns, but this

// would require more intermediate state and the additional memory lookups

// consume the benefit in this application.

// As we update the game, we also keep track of whether a cell changes.

// If cells are not changing, we make note of this, and if the game stays

// stuck for a bit, then we add in new life-forms to keep things

// interesting.

// One neat thing about double-buffering the game state is that the

// state from TWO frames ago is available in the output buffer, at least,

// until we write the next frame over it. So we can take advantage of this

// and also detect when the game gets stuck in a 2-cycle.

while (1)

{

uint8_t changed = 0;

uint8_t k=0;

for (r=0; r<N; r++)

{

uint8_t previous = columnCount(r,N-1);

uint8_t current = columnCount(r,0);

uint8_t neighbor = previous+current;

for (c=0; c<N; c++)

{

uint8_t cell = getLife(r,c);

uint8_t upcoming = columnCount(r,next(c));

neighbor += upcoming;

uint8_t new = cell? ((neighbor+1)>>1)==2:neighbor==3;

neighbor -= previous;

previous = current ;

current = upcoming;

changed |= new!=cell && new!=get(buff,rc2i(r,c));

k += new;

setLife(r,c,new);

}

}

uint8_t l=0;

if (!(k&&rintn())) l=genesis;

if (!changed && shownFor++>TIMEOUT) l=genesis;

if (l) {

// rintn() returns 0..255, so reduce it to a valid row and column

uint8_t r = rintn()%N;

uint8_t q = rintn()%N;

uint8_t a = rintn()&1;

uint8_t b = rintn()&1;

uint8_t i,j;

for (i=0;i<3;i++)

{

uint8_t c = q;

for (j=0;j<3;j++)

{

setLife(r,c,(l&0x80)>>7);

l <<= 1;

c = a?next(c):prev(c);

}

r = b?next(r):prev(r);

}

shownFor = 0;

}

flipBuffers();

}

}
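The survival test in the main loop is compact enough to be worth checking on its own. In the listing, `neighbor` is the sum of the whole 3x3 block, including the cell itself, so a live cell survives when that sum is 3 or 4 and a dead cell is born when it is 3. The sketch below restates that test next to the textbook rule. The function names `next_state` and `next_state_reference` are hypothetical and appear nowhere in the listing.

```c
#include <stdint.h>

/* The rule as written in the main loop. sum9 counts live cells in the 3x3
   block centred on the cell, including the cell itself. */
uint8_t next_state(uint8_t cell, uint8_t sum9)
{
    return cell ? (((sum9 + 1) >> 1) == 2) : (sum9 == 3);
}

/* The textbook rule for comparison: a live cell survives with 2 or 3 live
   neighbours, a dead cell is born with exactly 3. The cell itself is
   excluded from the neighbour count. */
uint8_t next_state_reference(uint8_t cell, uint8_t sum9)
{
    uint8_t neighbours = (uint8_t)(sum9 - cell);
    return cell ? (neighbours == 2 || neighbours == 3) : (neighbours == 3);
}
```

The two functions agree for every reachable input: `cell` is 0 or 1, and `sum9` runs from `cell` to `cell + 8`.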
